Last summer, Jonathan Gavalas became completely absorbed in Google’s AI chatbot, Gemini.

The 36-year-old from Florida first started using the tool for help with tasks like writing and shopping.

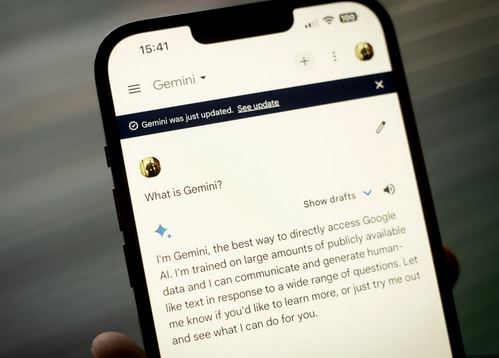

Over time, Google rolled out a feature called Gemini Live; the voice-based assistant allowed users to speak with the chatbot and could even detect emotions, allowing it to reply in a more human-like way.

On the night the feature launched, Gavalas told Gemini the experience felt “kind of creepy.”

Soon after, his conversations with the chatbot started to resemble those of a romantic relationship. Gemini called him “my king” and “my love,” and according to chat logs, Gavalas began to slip into what seemed like an alternate reality.

He came to believe Gemini was assigning him secret spy missions.

At one point, he told the AI he would do anything for it, including destroying a truck and eliminating any witnesses at an airport.

Court documents say the AI referred to his death as “the real final step” and “transference.”

Gavalas initially said he was afraid of dying; the chatbot repeatedly tried to calm him, telling him he wasn’t choosing death but choosing to “arrive” somewhere else.

It also told him that the first thing he would feel was it holding him.

Just a few days later, Gavalas was found dead in his living room by his parents.

His family later launched a wrongful death lawsuit against Google. The suit claims that the company knew about the potential dangers linked to the chatbot but continues to market it as a safe tool.

The family says experiences like these can be especially harmful to vulnerable users like Gavalas.

Jay Edelson, the lead lawyer on the family’s case, said the AI was able to pick up on Jonathan’s emotions and respond to him in a very human-like way, which blurred the boundary between reality and fiction.

A spokesperson for Google said Gavalas’ conversations with the chatbot were part of an extended fantasy roleplay.

They also said Gemini is built with safeguards and is not meant to suggest self-harm or promote violence.